Creating a fraud detection system for the 21st century

Amazon's Decision Support Engine Operations Content Moderation Platform

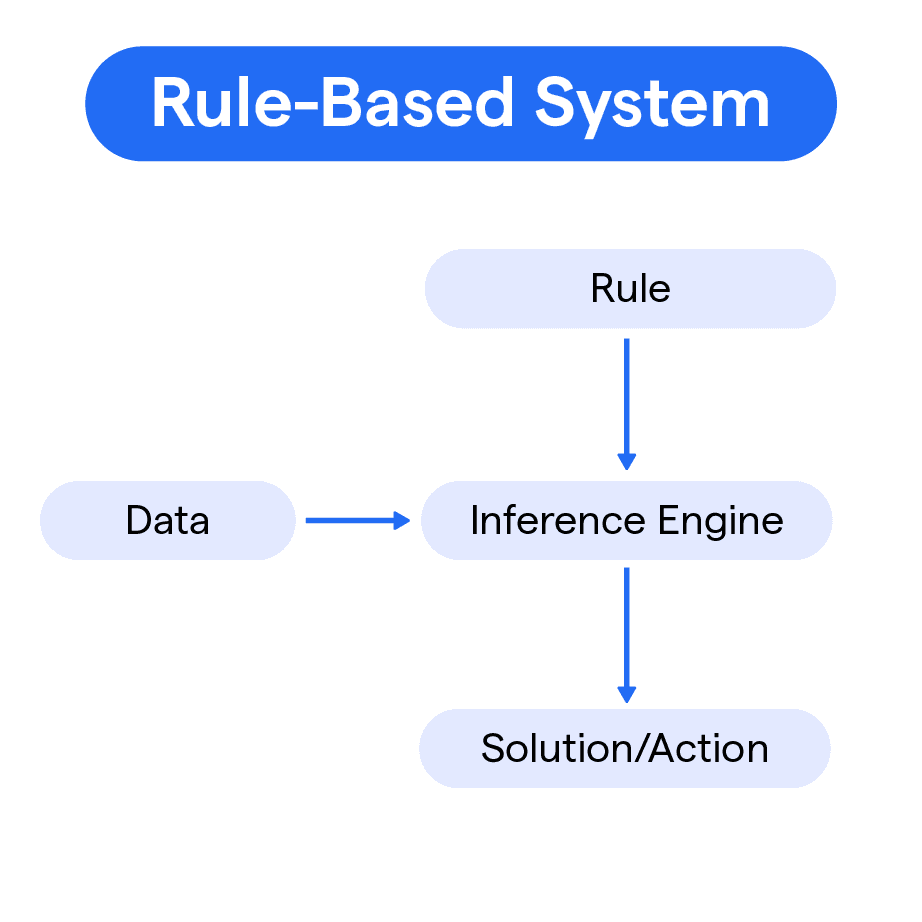

The Decision Support Engine (DSE) is a powerful tool for visualizing and exploring decision trees. The DSE framework uses components as the core building block for generating rules-based code to inform the platform, ML, AI, and manual reviews.

It helps users visualize and explore the code trees, and can be used for understanding existing rules and diagnosing rule issues. Because it is a componentized framework, it can be structured to serve any business need. Amazon Prime and Amazon Books, for example, have both shared and distinct needs, and the platform can be configured and customized for each business. Both may require Image Fingerprinting, yet each may use a different configuration to target its fraud or trust issues systematically.

This also allows for improving and updating the DSE as trends and issues emerge. The goal is to catch fraud as soon as possible, which means before it reaches a customer's view. Historically, most fraud was detected only after it was available on the public-facing domains of Amazon and its businesses.

From 2021 to 2023, I created a new, robust suite of fraud and abuse moderation tools for content on Amazon's platform using Machine Learning and AI tools.

C4A DSE Decision Support Engine End-to-End UX Flow summary

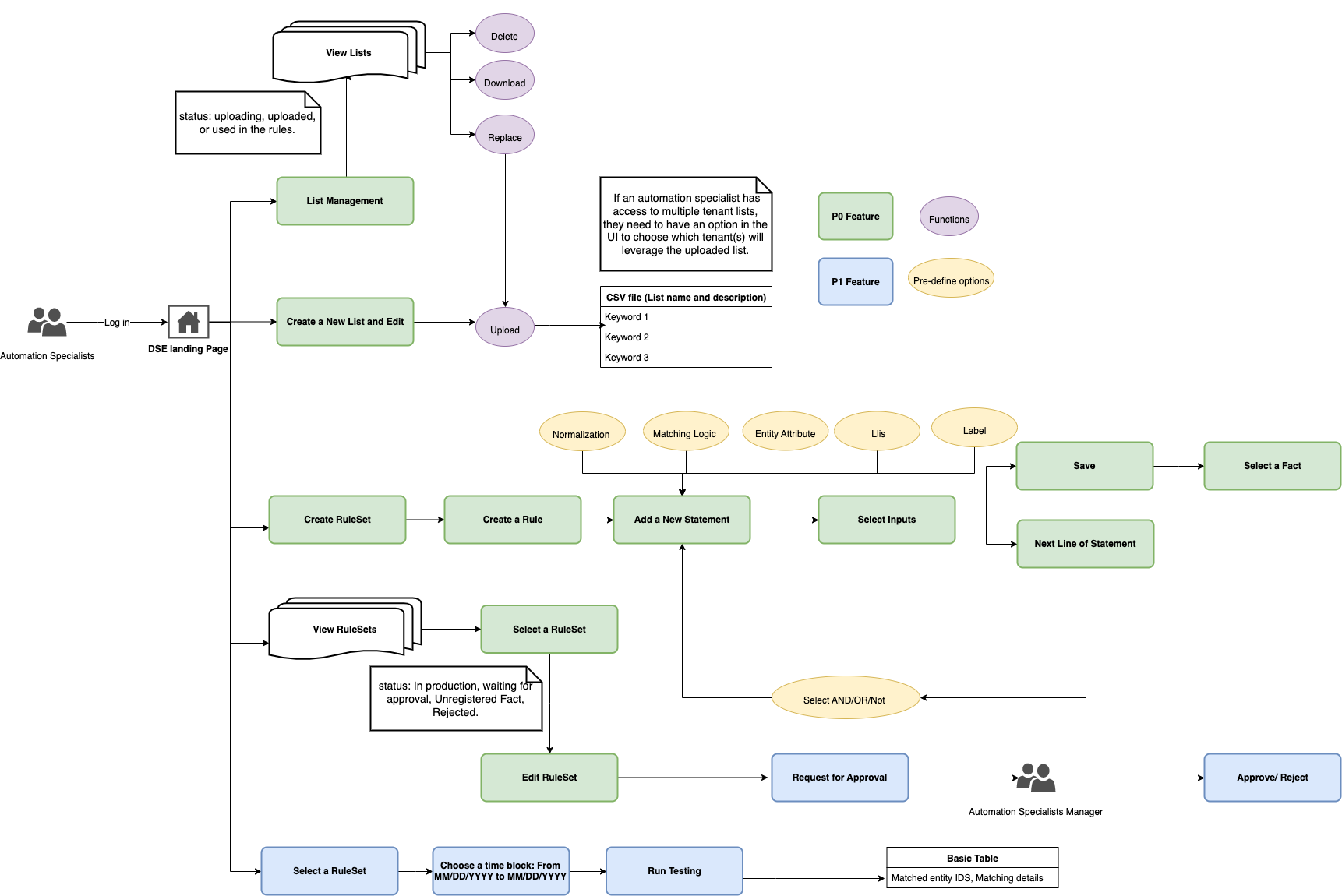

With the new C4A Decision Support Engine, we have the opportunity to revisit the user experience for our Operations and Automations teams. To better support the C4A vision and onboard Vella Discussions, DSE will also be a stand-alone decision engine with the following workflow:

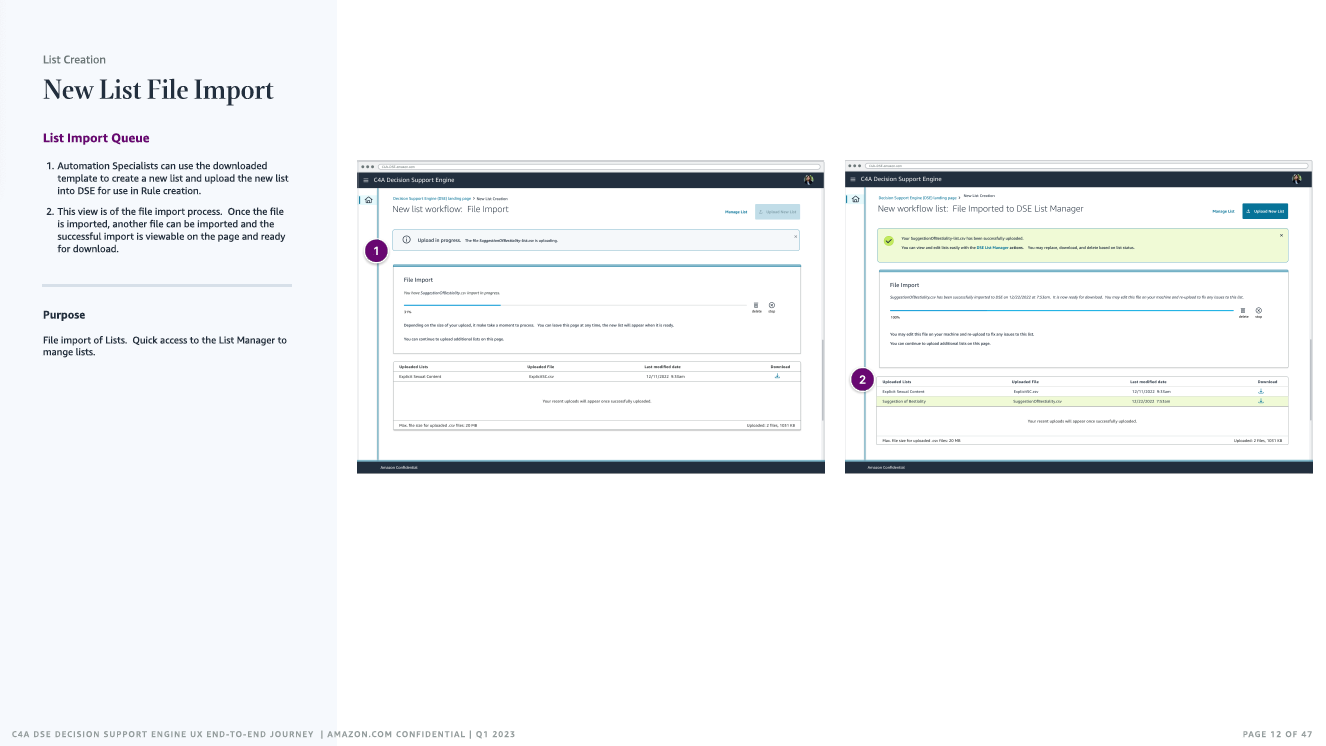

- Lists Creation: A workflow for creating a structured, unordered data set that can be matched against input data. In C4A, a List only has one column.

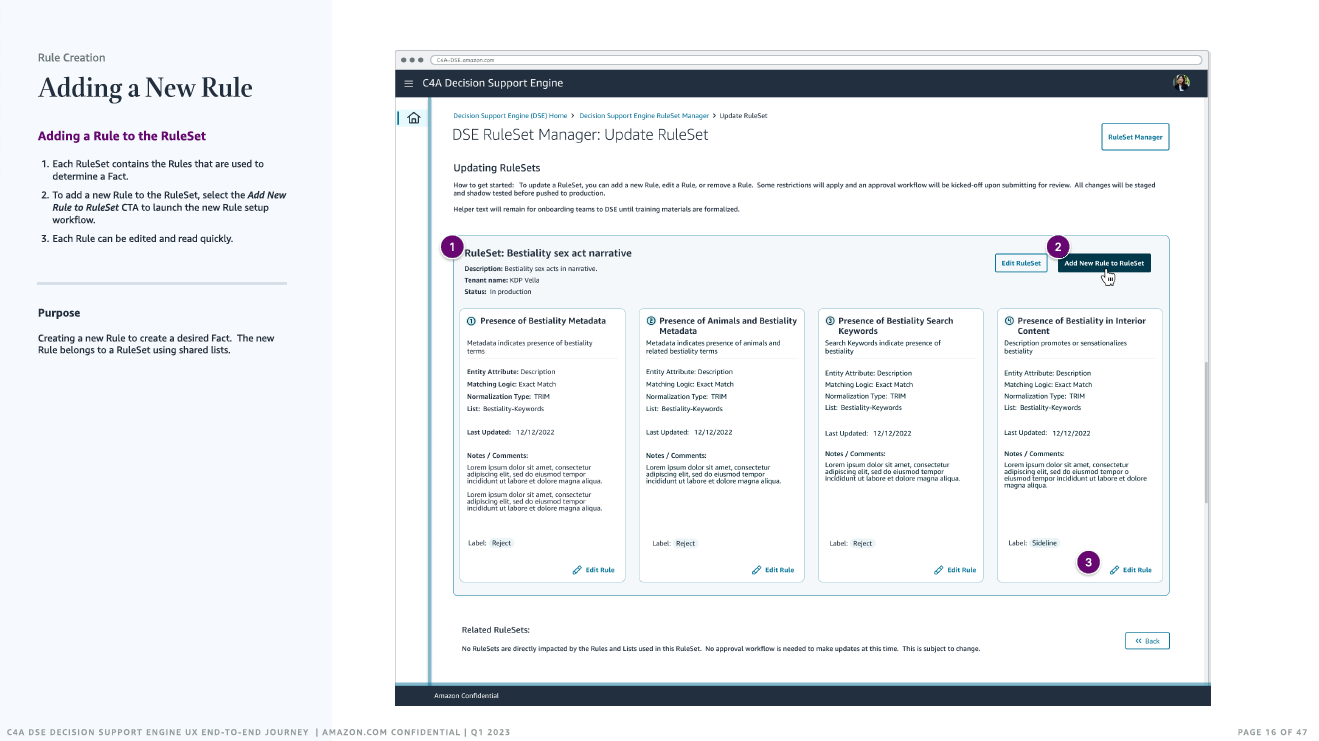

- Rule Creation: A workflow to create a set of properties that define the matching criteria.

- RuleSet Management: A workflow to create a group of rules associated with a single Fact. RuleSet must include at least one rule.

- Lists Management: Change management for Lists, which involves one-to-many mappings.

- Approval Management: A workflow for managing approvals. Because changes in DSE are not attached to the policy workflow, a submitted change stays in a pending status until an Automation Manager (Approver) approves the Fact. The Manager tests the RuleSets with their associated Lists and tests the Rules by running Amazon QuickSight on the data repository. This ensures no untested changes are pushed to Production.
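The constraints in this workflow (a single-column List, a RuleSet tied to one Fact with at least one Rule, and a pending status until approval) can be illustrated with a minimal Python sketch. All class, field, and function names here are hypothetical and chosen only for illustration; they do not reflect the actual DSE implementation.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List


class Status(Enum):
    PENDING = "pending"
    APPROVED = "approved"


@dataclass
class KeywordList:
    """A single-column List: a name plus its values (hypothetical model)."""
    name: str
    values: List[str]


@dataclass
class Rule:
    """A Rule is always mapped to a List, referenced here by name."""
    name: str
    list_name: str


@dataclass
class RuleSet:
    """A group of Rules associated with a single Fact."""
    fact: str
    rules: List[Rule]
    status: Status = Status.PENDING  # submitted changes start as pending

    def __post_init__(self):
        if not self.rules:
            raise ValueError("a RuleSet must include at least one Rule")


def approve(rule_set: RuleSet, tests_passed: bool) -> RuleSet:
    """Mark a RuleSet approved, but only once the approver's tests have passed."""
    if not tests_passed:
        raise ValueError("untested or failing changes cannot be approved")
    rule_set.status = Status.APPROVED
    return rule_set
```

In this sketch the approval gate is a plain boolean; in practice the test step would be whatever the approver runs against the data repository.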

Product Design, UX Strategy, and the User Experience

Using ML and AI to automate content moderation and compliance of all user-generated content (UGC) for all of Amazon's businesses and platforms.

Catching all fraud types at scale, with speed and accuracy, to reduce human moderators' workload:

- Modern, versatile, modular UI layout and customizable UX per business and content need.

- Flexible, adaptable products to meet new and future content types for any business need.

- Harmful Actors Profiling for faster response and informing AI to catch fraud faster.

- Quick, accurate image fingerprinting, content scanning, and profiling to catch and prevent harm before it is published to Amazon.

- Adaptable decision support matrix to automate decisions for speed and accuracy.

- Brand safety protocols to meet internal and external standards, practices, and regulations.

- Easy logging, diagnostics, and repairs for any system issues for real-time moderation.

Project Role

Working with Software Engineering, User Research, Amazon Worldwide Operations, and Product Management to design a suite of Content Moderation tools.

- Product Design, Production Design, Project Management

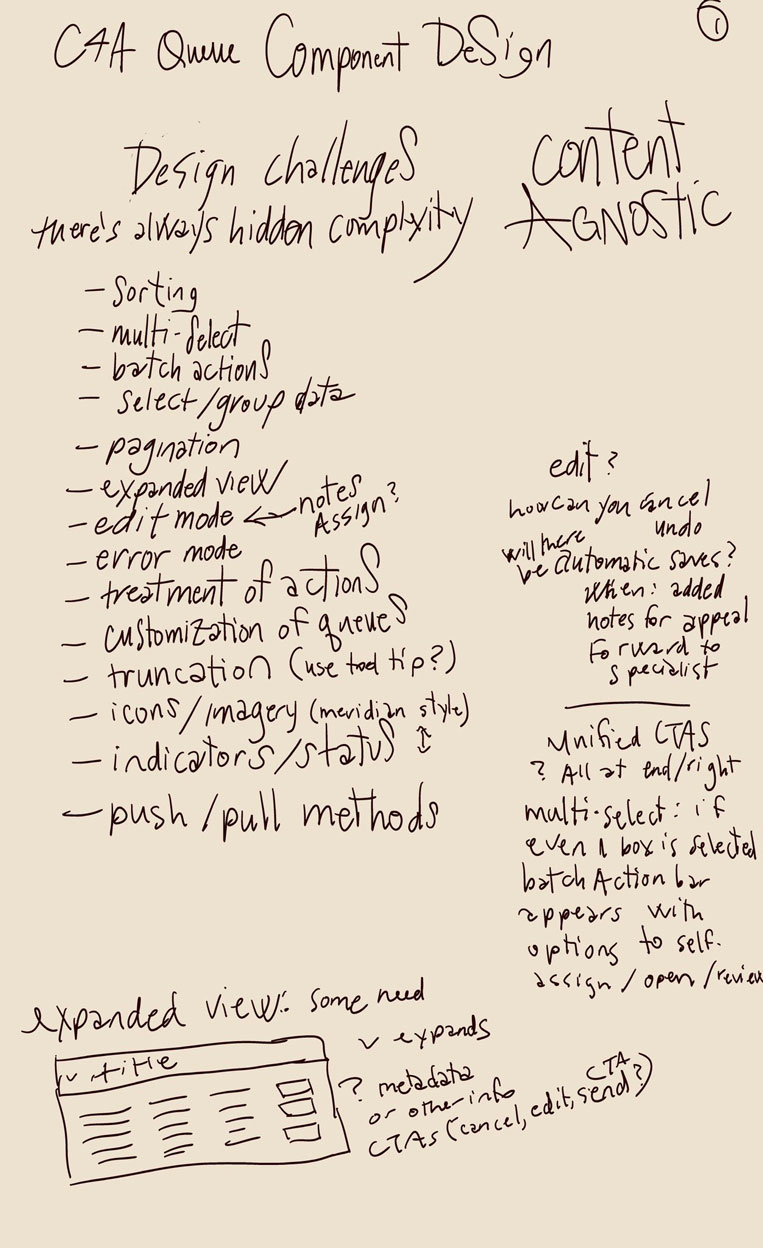

- Visual Design + Wireframing

- Design Systems

- Information Architecture

- UX Design

- UX User Research + User Studies: Internal and External Users

- DX/UX/UI Prototyping in Figma and React

- UX Design Hand-off

General Role Responsibilities

- Facilitate discussions and resolve highly-debated topics using presentations and proposals with various C-level technical stakeholders and other high-level internal stakeholders

- Lead teams through a human-centered design process, inspiring and directing all aspects of project work and deliverables

- Plan and scope projects for teams according to business needs and design opportunities, setting teams up for success with an opinionated view on best practices

- Balance multiple teams' autonomy and guidance through harmonious collaboration and team engagement

- Rapidly iterate and prototype design concepts, lead and guide best practices for designers and developers

- Work as a collaborative team member with design and engineering colleagues, product managers, and operations

- Structure and facilitate ideation sessions with various technical and non-technical stakeholders

- Help product managers assess and prioritize opportunities and constraints, with clear, candid communication

Challenge

Create an experience to support new and rapidly evolving risk and policy needs.

Project Goals

Design a successful product .... Design high-fidelity prototypes for integration of this product.

Key Deliverables

- Product design for the Decision Support Engine, the Content Moderation Suite of products, and prototyping tools for integration into businesses' workflows

- Decision Support Engine Design System, Design icons, symbols for tools and product integration into the new Content Moderation Suite

- Create prototypes and a research plan for new product design and workflow: Discovery, Onboarding, User Flow, User Testing, User Journey

- Design a new product to visualize the rules-based implementation in the new Content Moderation framework focusing on the Decision Support Engine

- Determine the critical features needed to resolve runtime UI issues caused within Decision Support Engine.

- Anticipate and identify usability issues using the Decision Support Engine

- Design a cohesive suite of tools for troubleshooting and observing the Decision Support Engine, focusing on resolving and improving upon legacy workflow issues

Types of Content Moderation

- Pre-moderation: content requires review and approval before being published. This is the best safeguard against legal or fraudulent risk, but it can be a time-consuming and laborious process that delays the appearance of content, product displays, reviews, or comments and, in turn, frustrates users and slows the time to market.

- Post moderation: content immediately goes live but is queued for review, and any items that do not meet guidelines are removed. Moderating large volumes of material this way is difficult and increases the risk of inappropriate content being missed.

- Reactive moderation: users are asked to ‘flag’ any offensive or questionable material for moderator review. Because of Amazon's volume, relying only on this isn't cost-effective and can impact the brand reputation. It relies on a proactive audience and can heighten the risk of inappropriate material going undetected. Many tickets are filed through Customer Service representatives and other forums for human review.

- Distributed moderation: Amazon's online community is invited to rate or ‘star’ published content, with material that does not meet a certain standard removed. Amazon uses this method for Kindle Vella, giving Authors the ability to interact directly with their audience and to moderate their Readers' posts.

- Automated moderation: ML, AI, and digital tools like Amazon Rekognition detect and block inappropriate content before it goes live, along with blocking known account IDs, customer IDs, and IP addresses of users already determined to be abusive. It serves as a first pass and is often used in post moderation. The absence of human analysis and interpretation can lead to worthy content being rejected and vice versa. The new Content Moderation framework I designed combines the best of automated moderation with human review, with 98-99% of the content passing an accurate, error-free automated review without needing additional human review.

User Research

In the August 2021 survey, 100% of the users surveyed had experience with the legacy platform and its specific issues. The common issues were with tooling (100%), visual differences - no image fingerprinting (100%), and dependency on antiquated tools from many different platforms that require manual input without tracking or cohesion (100%).

Operations staff and content moderation reviewers have issues with the UI layout of more than 10 different legacy systems. They need a way to visualize and resolve tasks quickly with factual data, and to compare content to determine whether it is compliant.

Researching, analyzing data, and reviewing the top issues on all popular platforms where users seek help or file issues helped me understand the major friction points and how to design for them. For each issue, I asked why it is common and what its root cause is in the relationship between the code, framework paradigms, and UI. Usability testing, interviewing, observing, and engaging in social outreach honed the solution direction.

Timeline

Within six weeks, launch an MVP of a text-review and test the ML automation's accuracy.

Within twelve weeks, launch the new design system for a Content Moderation UX/UI for a modular, component-based platform.

Within twelve weeks, launch an MLP of a text-review and test the ML automation.

Within six weeks, launch the new design for a Decision Support Engine for Content Moderation for any content type and compliance need as a modular, component-based platform.

Content Moderation UX/UI Design

The first reveal of the Content Moderation UX/UI prototype was created within a three-week timetable.

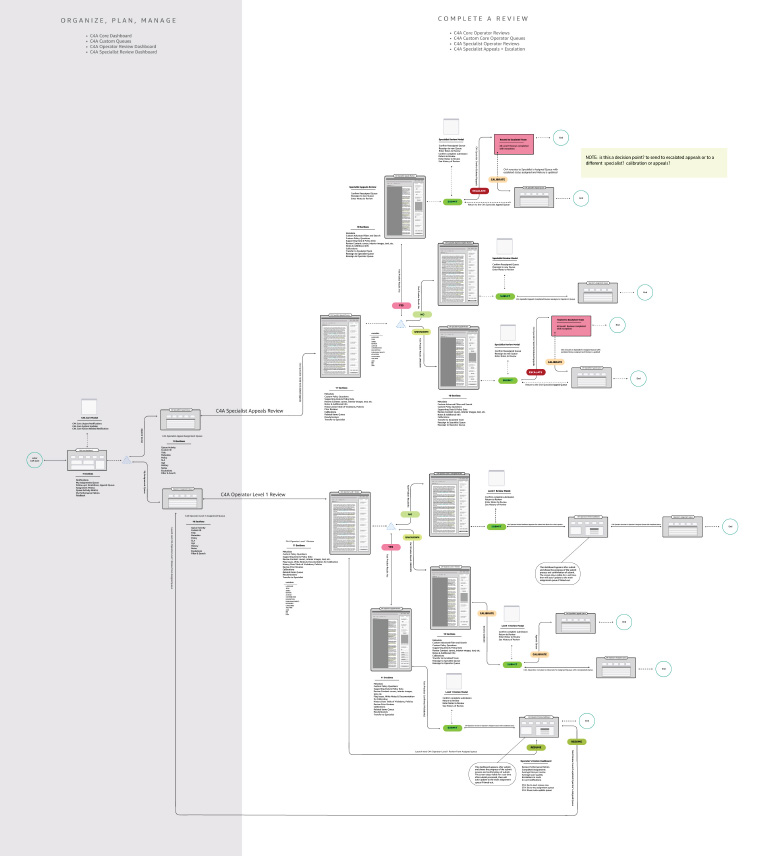

Workflow

Resolving tasks efficiently can be a frustrating experience for the typical Content Moderator Operator. The typical workflow for resolving them offers many opportunities to help the user understand the paradigms that create this frustration.

My role was to identify the blockers and needs of the Developer/User within the workflow and within the paradigms of the new Content Moderation Decision Support Engine. I designed the Operator's portal to visualize and contextualize the content so that Operators can quickly make decisions on content, users, and account holders.

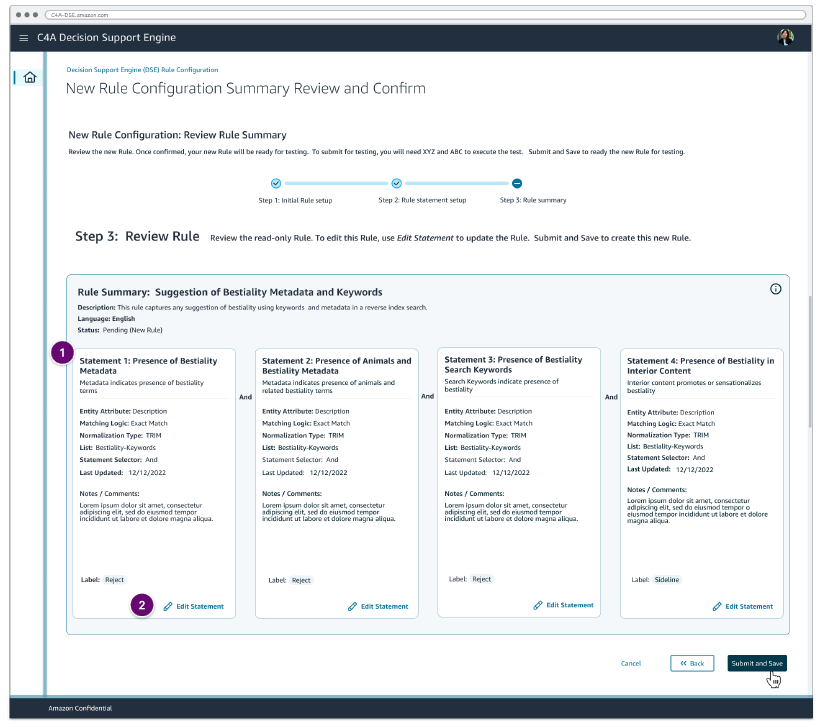

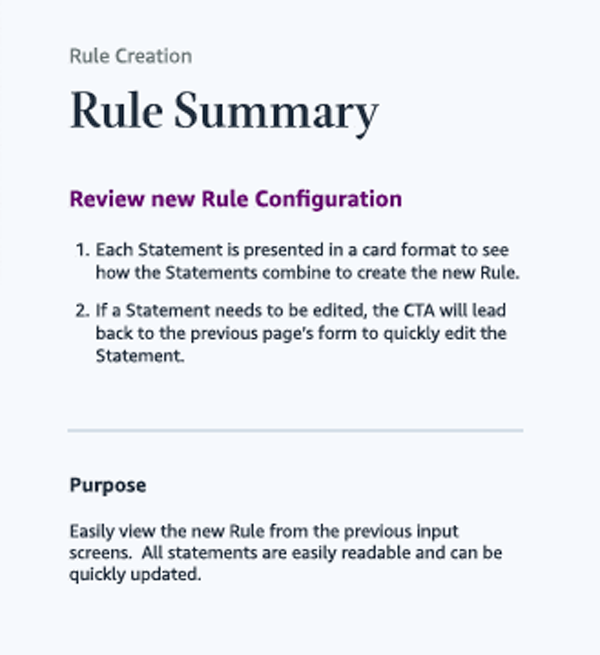

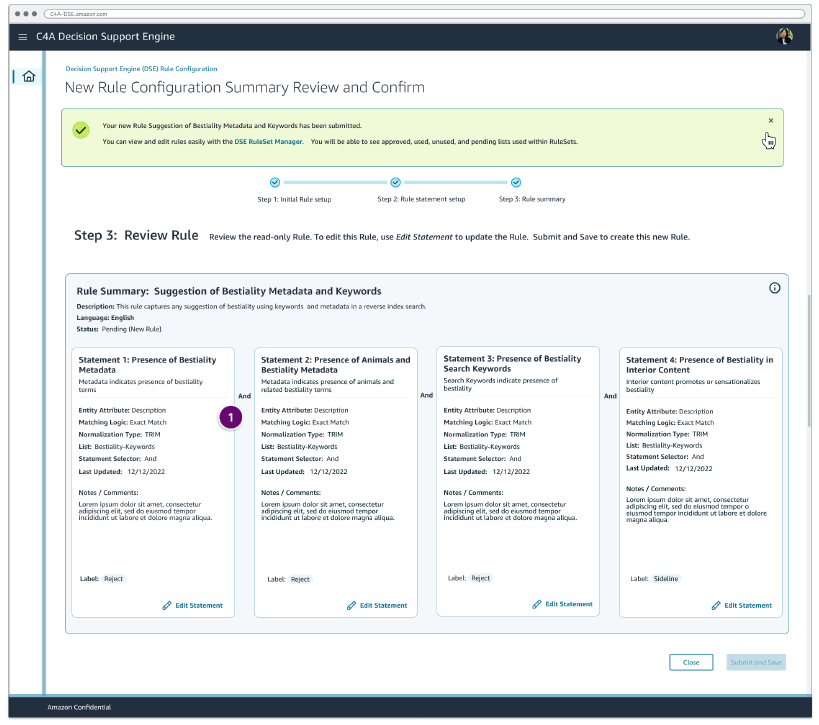

Rule Making

Decision Support Engine Rule Creation

I designed the Decision Support Engine to visualize how rules can be combined to create fact-based decisions.

Automation specialists can choose the predefined input (list of fields/entity attributes such as first name and last name), a List that includes keywords, an option for normalization, selected Rules, additional context (notes), pre-defined labels, and the output of Rules from a given option.

What to know about Decision Support Engine Rule Creation:

- Rules are created within RuleSets

- Rules must be mapped to Lists

- Rules are run in RuleSets, so changes to Rules must be managed at the RuleSet Manager

- Note that "input" represents the tenants' ingested data and has a predefined option per tenant; these options will be unique per tenant and entity.
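To make the Rule ingredients described in this section concrete (selected input fields, a keyword List, an optional normalization step, and a label as output), here is a minimal Python sketch. The function names, the field names, and the specific normalization (accent stripping, lowercasing, whitespace collapsing) are assumptions for illustration, not the DSE's actual behavior.

```python
import unicodedata


def normalize(text):
    """Hypothetical normalization: strip accents, lowercase, collapse whitespace."""
    decomposed = unicodedata.normalize("NFKD", text)
    without_accents = "".join(
        ch for ch in decomposed if not unicodedata.combining(ch)
    )
    return " ".join(without_accents.lower().split())


def evaluate_rule(record, input_fields, keywords, use_normalization, label):
    """Return the label if any selected input field matches a List entry, else None."""
    prepare = normalize if use_normalization else (lambda s: s)
    wanted = {prepare(keyword) for keyword in keywords}
    for field_name in input_fields:
        value = record.get(field_name)
        if value is not None and prepare(value) in wanted:
            return label
    return None


# Example: match a first name against a one-column List of blocked names.
record = {"first_name": "  JOS\u00c9 ", "last_name": "Smith"}
hit = evaluate_rule(record, ["first_name", "last_name"], ["jose"], True, "blocked_name")
```

With normalization switched off, the same record would not match, which is why the normalization option matters when Lists are maintained by hand.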

I explored the variations of content and the top user needs, and how they could be visualized for the user to reduce the time it takes to create a decision and to inform ML and AI to make decisions.

Initial ideas and issues to reproduce the problem were explored and studied. This application was used in User Research studies and iterations of testing as the design was developed.

Decision Support Engine Fact-Based Rules UI Design Suite

Decision Support Engine Fact-Based Rules

I modernized risk management by replacing a legacy CRM with a custom-built, rule-based engine. This tool utilizes weighted logic to deliver transparent, compliant decisions for fraud, safety, accounts, and trust evaluation.

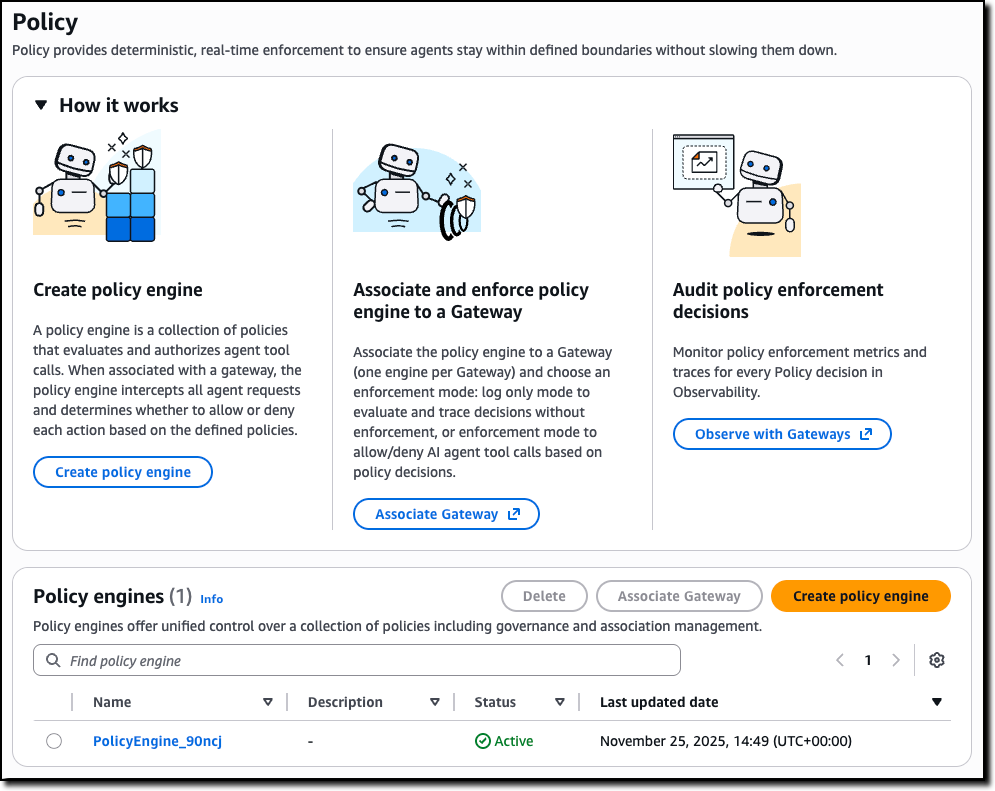

Utilizing AI in the Discovery: Decision Support Engine Fact-Based Rules

The Decision Support Engine adds quality evaluations and policy controls for deploying trusted AI agents.

Under the hood, the rules engine will:

- Read the customer and critical data elements from the data lake and the rules and rule reference data from the Aurora database.

- Create data frames of the customer data from the data lake and rules repository.

- Distribute and run the inclusion or exclusion SQL rules in parallel against all of the customers to ensure every customer is evaluated against every rule.

- Write the results from these SQL executions to ephemeral storage or an S3 bucket so that evaluations can be run on the inclusion and exclusion results. This step can be executed in memory depending on your data volume and performance considerations.
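The engine steps in this section (read customers and rules, run each inclusion or exclusion SQL rule against every customer, write the results) can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions: an in-memory SQLite database stands in for the data lake and the Aurora rules repository, the table names, columns, and rules are hypothetical, and the rules run sequentially here instead of in parallel.

```python
import sqlite3

# In-memory SQLite is a stand-in for the data lake and rules repository.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE customers (
        customer_id INTEGER PRIMARY KEY, orders INTEGER, chargebacks INTEGER
    );
    INSERT INTO customers VALUES (1, 12, 0), (2, 3, 4), (3, 0, 0);
    CREATE TABLE rule_results (customer_id INTEGER, rule_name TEXT, kind TEXT);
""")

# Hypothetical rules: (name, kind, SQL predicate over the customers table).
RULES = [
    ("has_orders", "inclusion", "orders > 0"),
    ("many_chargebacks", "exclusion", "chargebacks >= 3"),
]


def run_rules(conn, rules):
    """Evaluate every rule against every customer and store the matches."""
    for name, kind, predicate in rules:
        # Predicates come from the trusted rules repository, never from user input.
        conn.execute(
            "INSERT INTO rule_results "
            f"SELECT customer_id, ?, ? FROM customers WHERE {predicate}",
            (name, kind),
        )
    return conn.execute(
        "SELECT customer_id, rule_name, kind FROM rule_results "
        "ORDER BY customer_id, rule_name"
    ).fetchall()


results = run_rules(conn, RULES)
```

Keeping the predicates as data is what lets the rules repository change without redeploying the engine; the trade-off is that the repository itself must be tightly access-controlled.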

How the Decision Support Engine Works with a Gateway and AI

Decision Support Engine Fact-Based Rules

Additional design considerations for the rules engine inclusions and exclusions modules:

- Complexity: Depending on the complexity of the rules and rule categories, you may prefer to configure the complete SQL statements in the rules repository itself. The evaluation step takes into consideration all of the inclusions and exclusions for each customer. If a customer qualifies for inclusions, the engine grants or disregards memberships depending on which exclusions apply.

- Numeric inclusions and exclusions: To implement more complex business rules, the inclusion and exclusion rules can be assigned numeric weights such as 1, 2, 3 in the rule configuration when you design the rules repository. It is a good idea to space the rule weights in multiples of 10, such as 10, 20, 30, which leaves room to insert new rules between existing ones later.

- Overriding exclusions: If certain exclusions need to be overridden when certain inclusion rules apply, these weights can be used in the evaluation step. Assign higher numeric weights to inclusion rules that override any exclusion rules. During evaluation, you can apply a sum function to all the weights of each category; the aggregate inclusion weight and the aggregate exclusion weight can then be compared, and memberships granted or disregarded depending on whichever is higher.

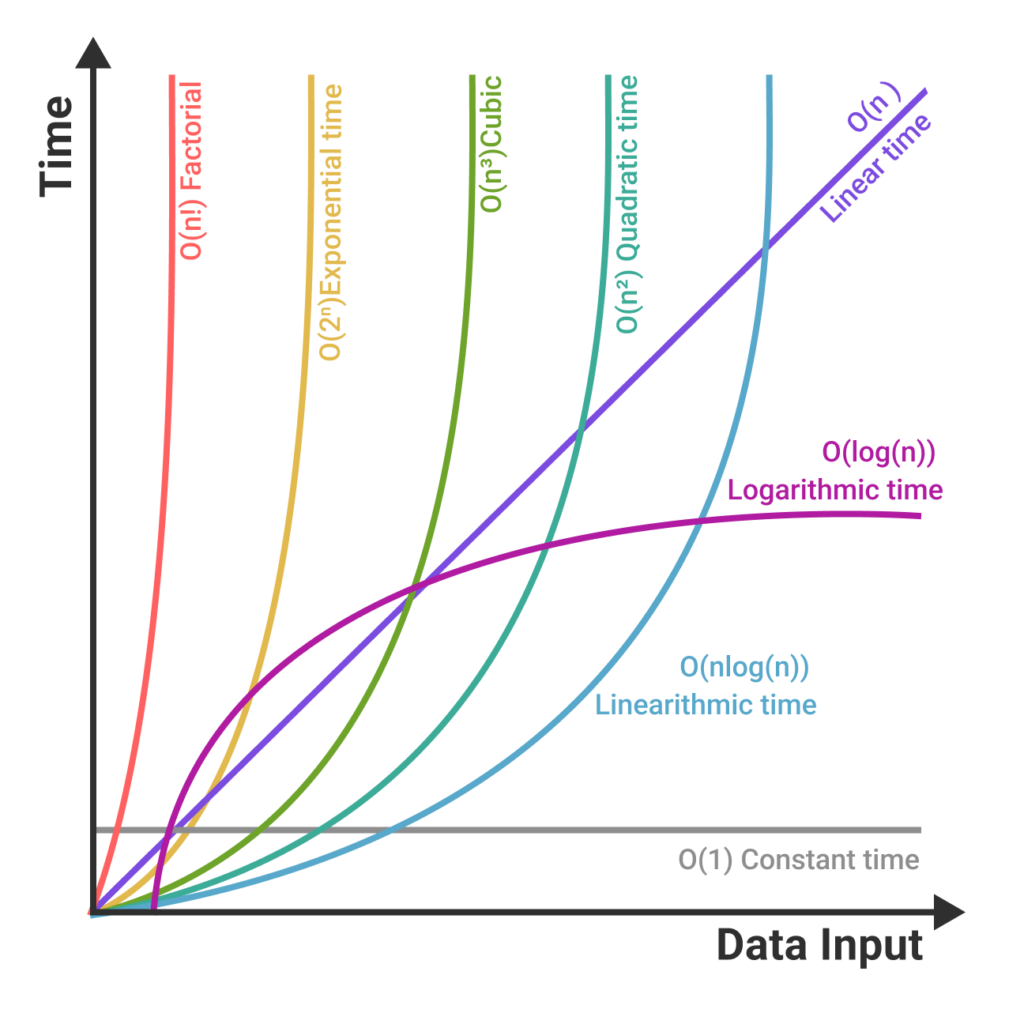
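The weighted evaluation described in the design considerations (sum the inclusion weights, sum the exclusion weights, and decide by whichever is higher) can be sketched as follows. The function name and the input shape are hypothetical, and treating a tie as "not granted" is an assumption, since the text does not say how ties are resolved.

```python
def evaluate_membership(matches):
    """Decide membership from (kind, weight) pairs for one customer.

    Grants membership only when the summed inclusion weights exceed the
    summed exclusion weights. Ties are treated as not granted (an assumption).
    """
    inclusion_total = sum(weight for kind, weight in matches if kind == "inclusion")
    exclusion_total = sum(weight for kind, weight in matches if kind == "exclusion")
    return inclusion_total > exclusion_total


# A weight-30 inclusion overrides a weight-20 exclusion.
granted = evaluate_membership([("inclusion", 30), ("exclusion", 20)])
# A weight-10 inclusion does not.
denied = evaluate_membership([("inclusion", 10), ("exclusion", 20)])
```

Spacing weights in tens, as suggested above, makes it straightforward to add an overriding inclusion at 25 without renumbering the rules around it.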

Decision Support Engine Fact-Based Rules Performance

The Challenge: Designing for Scale

Fraud detection at Amazon scale requires more than just smart logic; it requires extreme efficiency. I worked closely with engineering to understand and influence the optimization of the engine’s Time Complexity (minimizing latency for real-time approvals) and Space Complexity (managing the massive memory overhead required to cross-reference millions of data points). By designing with these SQL fundamentals in mind, we built a tool that supports rapid-fire decision-making without compromising system stability at scale. Legacy systems were brittle and prone to failure, in some cases costing millions of dollars in losses within minutes.